I am on the $99 per month plan and just renewed on Feb 28. By March 3, it said I was out of quota and had to upgrade. My receipt # is 2222-1499.

Is this about Kimi Code’s API running out of quota?

Thanks for your support! The $99/month Allegro plan should provide ample quota for most individual users, so if you're hitting its limits within just a few days, we truly appreciate you being such a power user!

Regarding the “out of quota” message, there are two different limits to check:

1. Throughput Capacity (5-hour rolling window)

If you're seeing intermittent 429 errors that come and go, you're likely hitting the throughput limit rather than your weekly cap. Kimi Code allocates each user's tokens over a rolling 5-hour window to prevent abusive burst traffic. As noted in our docs (https://www.kimi.com/code/docs/en/#key-advantages):

Throughput Capacity: A 5-hour token quota supports approximately 300–1,200 API calls, with a maximum concurrency of 30, ensuring uninterrupted operation for complex workloads.
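If you call the API from your own scripts, one way to stay within the documented maximum concurrency of 30 is to gate every request behind a semaphore. Below is a minimal client-side sketch using Python's `asyncio`; `call_api` and `run_all` are hypothetical placeholders for your real request code, and only the limit of 30 comes from the docs quoted above.

```python
import asyncio

MAX_CONCURRENCY = 30  # documented maximum concurrency for Kimi Code


async def call_api(i: int) -> int:
    """Hypothetical stand-in for one real API request."""
    await asyncio.sleep(0.01)  # simulate network latency
    return i


async def run_all(n_requests: int) -> tuple[list[int], int]:
    """Issue n_requests calls with at most MAX_CONCURRENCY in flight at once.

    Returns the results (in request order) and the peak in-flight count observed.
    """
    sem = asyncio.Semaphore(MAX_CONCURRENCY)
    in_flight = 0
    peak = 0

    async def guarded(i: int) -> int:
        nonlocal in_flight, peak
        async with sem:  # waits here while 30 calls are already running
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await call_api(i)
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(guarded(i) for i in range(n_requests)))
    return list(results), peak
```

For example, `asyncio.run(run_all(100))` sends 100 requests but never more than 30 at a time. Note that this only bounds concurrency; it does not by itself keep you inside the 5-hour token quota.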

I previously shared some tips in this forum thread about using the usage API (https://api.kimi.com/coding/v1/usages) to check your remaining quota programmatically and adapt your request rate accordingly.
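For the intermittent 429 errors described above, a common client-side pattern is to retry with exponential backoff instead of immediately re-sending. Here is a minimal sketch using only the Python standard library; `RateLimited`, `with_backoff`, and the default delays are hypothetical choices for illustration, not part of the Kimi Code API.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical exception your own client raises on an HTTP 429 response."""


def with_backoff(fn: Callable[[], T], max_retries: int = 5,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(); on RateLimited, wait base_delay * 2**attempt and try again.

    Re-raises RateLimited once max_retries retries have been used up.
    """
    attempt = 0
    while True:
        try:
            return fn()
        except RateLimited:
            if attempt >= max_retries:
                raise
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ... with defaults
            attempt += 1
```

If calls keep failing even after backing off, the weekly cap (section 2) is the more likely cause, and checking the usage endpoint is probably more informative than retrying further.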

2. Weekly Quota

If you’re completely blocked with a hard “quota exceeded” message, you’ve likely consumed your weekly allowance (which resets every 7 days). Please check your Dashboard:

- Look at the Weekly usage indicator at the very top. If it shows 100% consumption after just 3 days of intensive use, you're definitely a power user, and we appreciate your heavy usage!

- Please also review the Usage History section at the bottom and check whether the timestamps and request volumes match your actual usage pattern. Suspicious activity (e.g., requests at times you weren't using the API, or unusually high token counts) could indicate an API key leak; if the pattern looks unusual, please verify that your API keys haven't been compromised.
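If you copy your Usage History rows into a script, a quick check can flag the two patterns mentioned above: requests at times you weren't working, and unusually large token counts. This is a hypothetical sketch: the `UsageRecord` shape, the default active hours, and the 3x-median spike threshold are assumptions for illustration, not the Dashboard's actual export format.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class UsageRecord:
    """Hypothetical shape of one Usage History row."""
    timestamp: datetime
    tokens: int


def flag_suspicious(records: list[UsageRecord],
                    active_hours: tuple[int, int] = (9, 23),
                    spike_factor: float = 3.0) -> list[tuple[UsageRecord, str]]:
    """Return (record, reason) pairs worth a closer look.

    active_hours is the [start, end) hour range when you normally use the API.
    A record counts as a spike if its tokens exceed spike_factor * median tokens.
    """
    if not records:
        return []
    typical = median(r.tokens for r in records)
    start, end = active_hours
    flagged = []
    for r in records:
        if not (start <= r.timestamp.hour < end):
            flagged.append((r, "outside your usual hours"))
        elif r.tokens > spike_factor * typical:
            flagged.append((r, "unusually high token count"))
    return flagged
```

Adjust `active_hours` to your own schedule; anything flagged is a prompt to look closer, not proof of a leak.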

Let us know what the Weekly usage meter and Usage History show (please mask any request IDs), and we can help investigate further!

I upgraded to the $199 plan. Now, when I upload the 3 documents I am working on (about 1,400 pages) and ask 1 question, I get a response saying the conversation is too long. I uploaded these exact same 3 documents in a different chat before and talked about them for weeks. Why is it suddenly saying the conversation is too long?

Thank you so much for upgrading to the $199 plan! We truly appreciate your support, and we'll keep working hard to improve the models and product experience!

I want to clarify the context since you mentioned “upload”: are we discussing the Kimi Code (API/CLI) scenario, or the Chat/Agent (web interface or app) scenario?

If this is Chat or Agent (web interface or app):

Our conversation logic maintains the full context from the very first message throughout the entire chat (minus a few system helpers), up to the 256k token limit (262,144 tokens). This means every Q&A round accumulates — the documents plus all your subsequent discussion history counts toward the same context window.

Two things might have pushed you over the limit:

- Simply too many back-and-forth rounds accumulated over time

- Intermediate tool calls (like web searches) occasionally inject large amounts of information into the context
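To make the accumulation concrete, here is a rough sketch of how a chat's context budget fills up. `fits_in_context` is a hypothetical helper and the token counts in the example are made-up illustrative numbers; only the 262,144-token limit comes from the explanation above.

```python
CONTEXT_LIMIT = 262_144  # 256k tokens = 256 * 1024


def fits_in_context(document_tokens: int, rounds: list[int],
                    tool_outputs: list[int],
                    limit: int = CONTEXT_LIMIT) -> tuple[bool, int]:
    """Sum everything that stays in context and compare it to the limit.

    document_tokens: tokens in the uploaded files (kept for the whole chat)
    rounds: tokens per question-and-answer round, all of which accumulate
    tool_outputs: tokens injected by intermediate tool calls (e.g. web search)
    """
    total = document_tokens + sum(rounds) + sum(tool_outputs)
    return total <= limit, total
```

With these illustrative numbers, 150,000 tokens of documents plus 40 rounds of about 2,000 tokens each still fit (230,000 tokens), but a single 40,000-token web-search result on top pushes the total to 270,000, over the limit.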

Try starting a new chat: summarize the key points from your previous discussion, re-upload the 3 documents, and continue from there. This resets the context window.

If this is Kimi Code / CLI tools:

These tools usually have automatic compaction to manage context. Do you have the specific error message? The most common issue I’ve encountered is when MCP tools return extremely large outputs (e.g., massive search results or file reads), which can blow up the context window beyond the 256k token capacity in one shot.
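If you write or configure your own MCP tools, one defensive pattern is to cap how much text a single tool call can return before it reaches the model. The sketch below is hypothetical: `cap_tool_output`, the 20,000-character default budget, and the truncation notice are illustrative choices, not a Kimi Code setting.

```python
def cap_tool_output(text: str, max_chars: int = 20_000) -> str:
    """Truncate a tool result so one call cannot flood the context window.

    Keeps the first and last max_chars // 2 characters and replaces the
    middle with a short notice stating how much was dropped.
    """
    if len(text) <= max_chars:
        return text
    half = max_chars // 2
    omitted = len(text) - 2 * half
    notice = f"\n[... {omitted} characters omitted ...]\n"
    return text[:half] + notice + text[-half:]
```

Counting characters rather than tokens keeps the sketch dependency-free; a real tool might prefer a tokenizer-based budget.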

Any additional details or error logs you can share would be greatly appreciated!

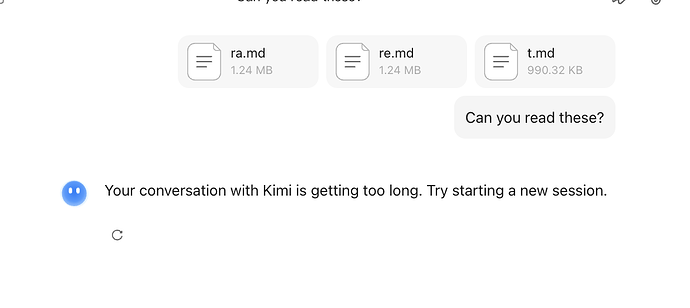

I opened a new chat, uploaded the exact same 3 docs as in my prior chat, and immediately got the message that the conversation is too long. You can see it in the screenshot.

Yes, that makes sense now. Those three markdown files add up to ~3.5MB of raw text. If you're using K2.5 Thinking mode, the entire content is loaded into the context window before the conversation starts. Our 256k-token limit corresponds to roughly 500k characters of raw text (the exact figure varies with content), so 3.5MB far exceeds it.
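The size check can be sketched as follows. It assumes about 1.9 characters per token, derived from the ~500k-characters-per-262,144-tokens rule of thumb; real ratios vary with language and formatting, so treat the result as an order-of-magnitude estimate. `estimate_tokens`, `times_the_limit`, and `total_chars` are hypothetical helpers, not a Kimi tokenizer.

```python
CONTEXT_LIMIT = 262_144                    # 256k-token context window
CHARS_PER_TOKEN = 500_000 / CONTEXT_LIMIT  # ~1.9, from the ~500k-character rule of thumb


def estimate_tokens(num_chars: int) -> int:
    """Rough token estimate from a character count."""
    return round(num_chars / CHARS_PER_TOKEN)


def times_the_limit(num_chars: int) -> float:
    """How many context windows this much text would fill (1.0 = exactly full)."""
    return estimate_tokens(num_chars) / CONTEXT_LIMIT


def total_chars(paths: list[str]) -> int:
    """Count characters across UTF-8 text files, e.g. your .md uploads."""
    count = 0
    for path in paths:
        with open(path, encoding="utf-8") as f:
            count += len(f.read())
    return count
```

For ~3.5 million characters (3.5MB of mostly ASCII text), this estimates about 1.8 million tokens, roughly seven context windows' worth, which is consistent with the immediate "too long" error.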

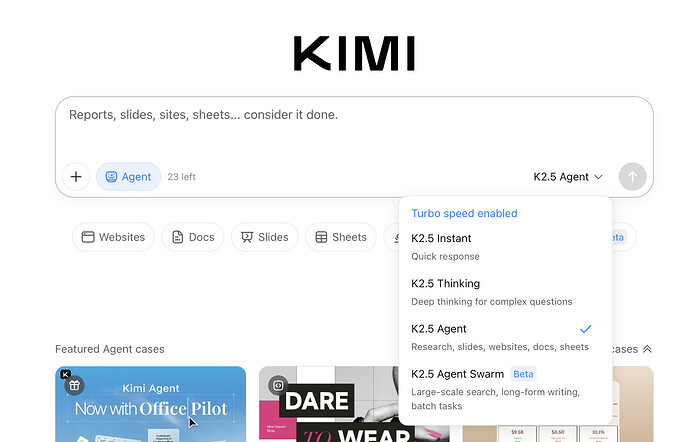

I understand now: you were likely using K2.5 Agent mode in your previous successful conversation. Agent mode spins up a computer environment that processes large documents incrementally, compressing and paging through them on demand rather than loading everything into context at once. This lets you discuss large documents without hitting the context limit.
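The general idea of paging through a document instead of loading it whole can be illustrated with a simple chunker. This is a hypothetical sketch of the concept only; it is not how K2.5 Agent mode is implemented, and `page_document` and its default character budget are illustrative assumptions.

```python
from collections.abc import Iterator


def page_document(text: str, page_chars: int = 100_000) -> Iterator[str]:
    """Yield consecutive pages of at most page_chars characters.

    Splits on blank lines ("\n\n") so pages end at paragraph boundaries;
    a single paragraph longer than page_chars is hard-split.
    """
    current: list[str] = []
    size = 0  # length of "\n\n".join(current)
    for para in text.split("\n\n"):
        # Hard-split any paragraph that would not fit on one page by itself.
        pieces = [para[i:i + page_chars] for i in range(0, len(para), page_chars)] or [""]
        for piece in pieces:
            extra = len(piece) + (2 if current else 0)  # include the "\n\n" joiner
            if current and size + extra > page_chars:
                yield "\n\n".join(current)
                current, size = [], 0
                extra = len(piece)
            current.append(piece)
            size += extra
    if current:
        yield "\n\n".join(current)
```

An agent-style loop could then read one page at a time and keep only short notes between pages, so the context holds a single page plus notes rather than the whole file.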

Try starting a new chat, selecting K2.5 Agent from the dropdown (as shown above), uploading your three documents, and then beginning your discussion. Agent mode should handle files of this size properly.

Thanks again for your support!